Who will program manage the program managers?

The case for training the people who allocate billions in R&D

Imagine you’re 12 months into leading a three-year research program at ARPA-H. You have seven teams working on various components of a shared technical challenge; among them, two are ahead of schedule, one seems to have hit a wall but the principal investigator is undeterred, and one has pivoted to something more promising than what they originally proposed but it’s technically outside of the program’s core scope. You need to decide how to allocate the next tranche of $5 million across these teams. There is no study section or review panel. You can seek outside perspectives, but at the end of the day it’s your call to make, and your decision will shape when (or whether) the technical breakthrough you’re aiming for is achieved.

This is a relatively normal week for an ARPA[1] program manager. But few people who walk into this role have received any formal preparation for it.

A few weeks ago in our “What we’re reading this week”, we highlighted two grantee initiatives, Speculative Technologies’ Brains Accelerator and Renaissance Philanthropy’s Big if True Science (BiTS) Accelerator, that are trying to change that. These programs identify ambitious scientists with ideas for coordinated research programs,[2] work with them over a few months to refine their scientific vision and planned approach for program implementation, and then help them find a role with (or funding from) federal agencies, philanthropies, or research incubators that are seeking talent.

So, essentially, programs to improve the quality of program managers’ management.

This seems like a pretty meta thing to fund, even for a program that specializes in metascience. But we can decompose the theory of these efforts’ value into four more tangible claims:

1. There are efficiency gains to be had in how we manage and allocate scientific resources.
2. Coordinated research programs are a high-leverage context for intervening in R&D resource allocation.
3. Graduates of training and accelerator programs are likely to make higher quality management and resource allocation decisions than they[3] would have otherwise.
4. Graduates of these programs are reasonably likely to actually end up in charge of substantial, high-leverage R&D resources.

Are there efficiency gains to be had in scientific resource allocation?

If you’re tempted to just say “yes” and move along, stick with me. It’s true that there’s been a lot of conversation about the inefficiencies of our modern scientific ecosystem: a lack of risk-taking, homogeneity in the structure of scientific institutions, biases in what gets published, etc. These dynamics suggest that Things Could Be Better™. But they don’t necessarily suggest that changes in management and resource allocation, within existing scientific frameworks and institutions, could make things better.

Let’s look at two papers that provide some evidence that this is likely to be the case.

The first is Azoulay, Graff Zivin, and Manso (2011), which compares scientists funded by the Howard Hughes Medical Institute (HHMI) — which provides long-term, unrestricted support to individual researchers — to comparable NIH-funded scientists. They find that HHMI-funded scientists produce considerably more high-impact work, suggesting the mechanism of resource allocation that is used by funders can meaningfully affect the amount and quality of scientific output, even without changing the total quantity of resources.

The second is a new paper by Bertolotti, Myers, and Tham (2025), which takes a more holistic view, ambitiously attempting to measure the overall misallocation of resources as a function of productivity across the U.S. scientific landscape. They develop a survey-based method for estimating individual researchers’ productivity by using hypothetical salary-for-{resources/time} tradeoffs, and find that the distribution of scientific productivity is extremely skewed: the 90th percentile researcher is roughly 30 times more productive than the 10th percentile researcher. Importantly, they show that current resource allocations don’t track this distribution well. Their counterfactuals suggest that more efficient allocation of existing resources could produce the same increase in scientific output as raising the federal science budget by billions of dollars.

It’s incredibly difficult, however, to predict the impact and potential outcomes of resource allocation from observables. That means that the judgement, taste, and expertise of people making allocation decisions is critical, and any mechanism that helps improve the quality of those decisions can have very large returns.

Are coordinated research programs uniquely high-leverage?

In terms of leverage for improving R&D resource allocation, ARPA-style agencies (and similar coordinated research programs) are a unique opportunity.

Azoulay, Fuchs, Goldstein, and Kearney (2019) lay out the core features of the ARPA model, highlighting in particular the importance of the empowered program manager (a.k.a. program director). PMs identify technology directions, create programs, select performers, assemble and reshape research teams, and actively manage portfolios. As they put it, “hiring talented program staff with a penchant for exploration is pivotal to the success of ARPA‑like programs.” Goldstein and Kearney (2020) provide a view into what this active management looks like in practice. Using confidential ARPA-E program data, they show that program managers routinely modify the terms of projects, reallocate resources, and make go/no-go calls throughout a program’s lifecycle.

This reliance on individual, skilled decision-makers makes these agencies high-tractability targets for improving decision quality. At NIH, allocation decisions are distributed across thousands of reviewers and study sections. At DARPA, roughly 100 program managers control $3-4 billion per year, meaning that improving one PM’s judgement potentially affects tens of millions of dollars in R&D spending. And because that spending is largely discretionary rather than process-driven, training and improved judgement can more easily flow to decisions and outcomes.
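The "tens of millions of dollars" figure above is simple division. As a rough sketch, assuming the budget is spread evenly across program managers (an illustrative simplification, not a claim about how DARPA actually divides its portfolio), the arithmetic looks like this. The function name `budget_per_pm` is hypothetical.

```python
def budget_per_pm(annual_budget_usd: int, n_program_managers: int) -> float:
    """Average annual budget per program manager, assuming an even split."""
    return annual_budget_usd / n_program_managers


# Figures from the text: roughly 100 PMs controlling $3-4 billion per year.
N_PMS = 100
low = budget_per_pm(3_000_000_000, N_PMS)
high = budget_per_pm(4_000_000_000, N_PMS)

print(f"${low / 1e6:.0f}M to ${high / 1e6:.0f}M per PM per year")
# prints "$30M to $40M per PM per year"
```

Real portfolios vary widely in size, so this is best read as an order-of-magnitude check rather than a description of any individual PM's budget.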

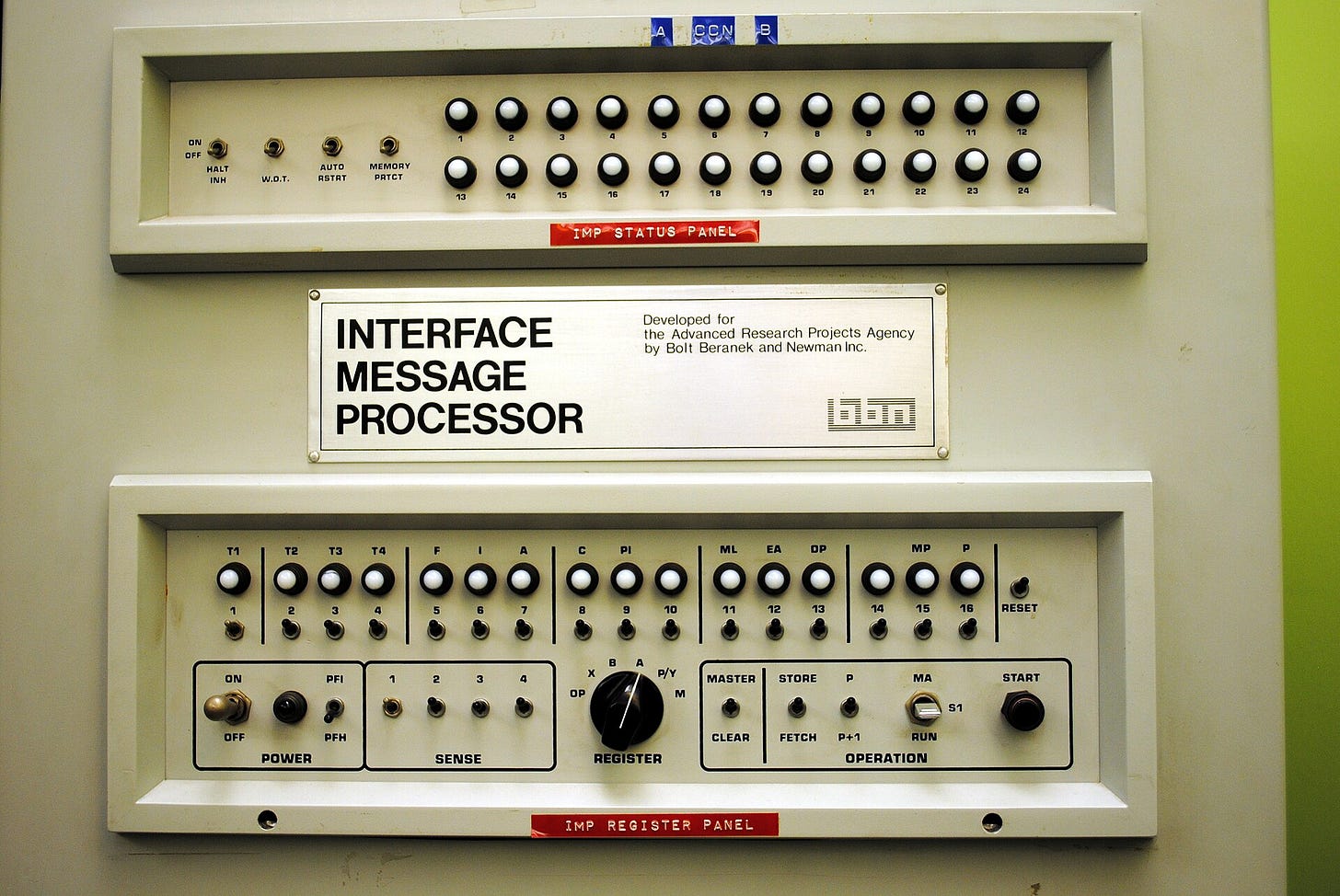

So coordinated research programs are great places to intervene in terms of tractability. What about in terms of the impact of the scientific outcomes? Anecdotally, they’re great on this as well! If you’re one of the loyal innovation policy stans following this blog, you can probably rattle off a few of DARPA’s historical wins (the internet! autonomous vehicles! GPS!). But you can do something similar for NSF (also the internet! MRI! gravitational waves! AI!), so how can we actually tell how per-dollar outcomes compare?

Quantitatively, it’s difficult. Getting apples-to-apples comparisons across institutional designs is not straightforward, and much of the excellent research on the topic of ARPAs is historical and qualitative rather than empirical.

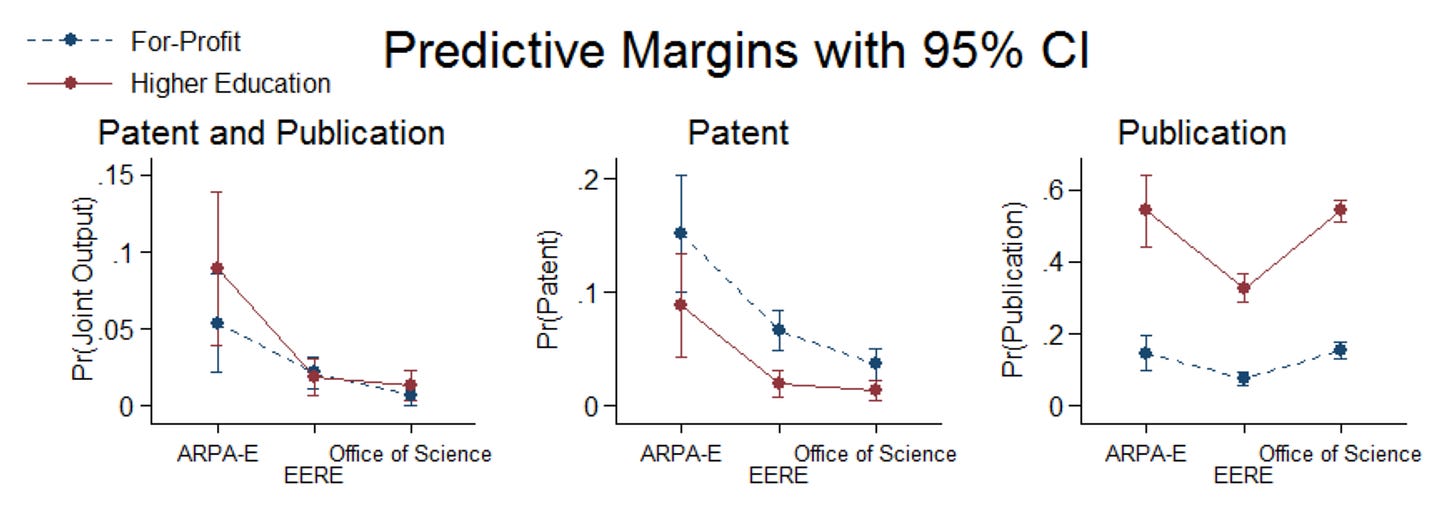

Fortunately, in 2018, Anna Goldstein and Venkatesh Narayanamurti published a study comparing patenting and publication outcomes from ARPA-E grantees to those from the DOE’s Office of Energy Efficiency and Renewable Energy (EERE) and Office of Science (OS), which fund “applied research, development, demonstration and deployment activities” and “basic research programs”, respectively. Across a range of outcomes, they find that ARPA-E grants:

- lead to more technological output per dollar than EERE or OS (e.g. number of patents, at least one cited patent);
- lead to more scientific output than EERE, but are roughly on par with OS (e.g. number of publications, at least one highly cited publication);
- and are substantially more likely to produce both technological and scientific output than EERE or OS (e.g. at least one patent and at least one publication).[4]

A back-of-the-envelope synthesis of the paper’s results across comparison office, performer type, and outcome suggests that ARPA-E generated roughly 3x as much observable paper-and-patent output per dollar as traditional DOE grantmaking.[5] Importantly, this includes a selection effect: at the time, ARPA-E’s budget was significantly smaller than either comparator agency, and as a result it could be more selective. Depending on how you frame the counterfactual, this could be a feature or a bug of the comparison, but in any case, a conservative adjustment comparing only within the same budget window of top-performing grants still finds a 1.3x output advantage for ARPA-E.[6] With the necessary caveats about papers and patents, these findings are noteworthy in that they suggest that the model itself (even controlling for performer type and selection) is meaningful.

Does training actually help?

Here the evidence is sparser. We don’t have a great sense of how effective these types of programs will be at improving eventual resource allocation and use.[7] But we do have some broader evidence on management training that is consistently positive, and some spillover benefits from programs like these that suggest additional value above and beyond generic training.

The first place to look is the broader management training literature. A classic paper here is Bloom et al. (2013), which randomly provided management consulting to Indian textile firms, and found that it raised productivity by 17% in the first year, with effects persisting and in some cases growing over time. Giorcelli (2019) finds lasting benefits of management training on firm performance in a very different setting (Italian firms sending managers to train with US companies under the Marshall Plan’s Productivity Program), and Giorcelli (2024) finds that a WWII-era MBA-style program had significant benefits for individual performance and career outcomes. Meta-analyses (e.g. Busso, Park, and Irazoque, 2023) find that management training has productivity benefits in the 5-10% range.

A somewhat tangential literature on venture capital and private equity funds looks at the returns to experience rather than training. For example, the “first-fund” penalty in PE/VC seems to be on the order of 10% with respect to performance (Kaplan and Schoar, 2005), and more experienced VCs are better able to identify and invest in unproven talent (Gompers, Kovner, Lerner, and Scharfstein, 2006). To the extent that training can compress some of the learning curve, giving first-time PMs exposure to the tacit knowledge, strategies, and networks that typically only accumulate through experience, these benefits may apply.

The link to our context is admittedly tenuous (textile firms, postwar Italian manufacturers, and VC funds are not ARPA programs), but this literature is helpful for calibrating reasonable effect sizes; if we observed a similar 5-10% improvement in our context, it would be as if graduates’ $50m ARPA programs got an extra $3+ million-worth of scientific outcomes for essentially free.[8]
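To make the effect-size calibration concrete, here is a minimal sketch of the arithmetic. It assumes the 5-10% productivity range from the management training literature transfers directly to a $50 million program, which is the tenuous link noted above. The function name `implied_gain_usd` is hypothetical.

```python
def implied_gain_usd(program_budget_usd: int, uplift_pct: int) -> float:
    """Dollar-equivalent value of a percentage improvement in output."""
    return program_budget_usd * uplift_pct / 100


PROGRAM_BUDGET_USD = 50_000_000  # the $50m program size used in the text

for pct in (5, 10):
    gain = implied_gain_usd(PROGRAM_BUDGET_USD, pct)
    print(f"{pct}% uplift -> ${gain / 1e6:.1f}M of additional output")
```

Under these assumptions the implied gain runs from $2.5 million to $5 million per program, a range that contains the "$3+ million" figure.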

And importantly, accelerators don’t just train, they also select and place. PM roles are highly unusual within the scientific ecosystem, are often difficult to hire for, and are high risk if the wrong person is selected. As a result, the identification and matchmaking that these types of programs provide can be a high-leverage activity in itself. This may be an even more important factor than management training if the literature is to be believed; Burks, Cowgill, Hoffman, and Housman (2015) study referral hiring, and find that referred workers in high-tech roles produce 19% more citation-weighted patents than non-referred workers.

While the state of the evidence on this question is currently middling, there seems to be growing interest in it. The Scientific Labs Management Project, for example, is applying the World Management Survey methodology to scientific labs, aiming to systematically measure the quality of organizational practices as a first step toward identifying where management training could help most. Results aren’t out yet, but the project’s existence signals recognition of scientific management as an under-studied bottleneck. Until then, we think the circumstantial case is relatively strong, and would be excited to see more rigorous research and evaluation.

Will graduates be put in charge?

Early signs point to yes! From the first BRAINS cohort of 16 fellows in 2024, several have already taken steps towards research leadership roles: two are now running Focused Research Organizations (FROs), another is leading a program at a philanthropic foundation, and others have entered hiring pipelines at various ARPA-style agencies. In aggregate, Speculative Technologies reports that first-cohort fellows have collectively secured more than $70 million in committed funding from philanthropists and governments. The program has since expanded to a 2025 AI-focused cohort and a 2026 cohort.

On the BiTS side, the program has scaled rapidly since its late 2024 announcement. Renaissance Philanthropy has now launched several cohorts in direct partnership with government innovation agencies: a UK cohort in partnership with ARIA,[9] an EU cohort supported by SPRIN-D, and a Japan cohort as part of the Cabinet Office’s International Research Program. Several BiTS fellows are now in, or in the pipeline for, PM roles at ARIA and SPRIN-D, and others are in the process of setting up coordinated research programs outside of government.

The long-term vision for these efforts is even more ambitious than the impacts discussed above; ideally, these programs would raise the ambition of the scientific ecosystem, bringing more philanthropic resources into frontier science and inspiring the creation of new ARPA-style initiatives. Ben Reinhardt, CEO of Speculative Technologies, put it well, saying, “Over time, we’re hoping to do for ARPA programs, FROs, and other coordinated research programs what YC and other accelerators did for startups. Starting and working at startups went from an obscure pursuit… to a normalized (if still high-variance) path for people all over the world.” We don’t know yet whether that vision will be realized, but the early trajectory is exciting.

[1] While some of the funding bodies that are relevant to this piece are literally “Advanced Research Projects Agencies” (ARPAs) – e.g. DARPA, ARPA-H, ARPA-E, and IARPA – I use this term broadly to refer to organizations that follow the model set forth by DARPA, which includes international agencies like ARIA and SPRIN-D and arguably some private initiatives that host or incubate coordinated research programs.

[2] What are coordinated research programs? In short, large-scale efforts, typically run by a single leader or small group, organizing work across multiple teams or technical workstreams towards a precise goal. If that sounds nebulous, that’s because it is. DARPA programs are a common and relatively legible example of the typology.

[3] Or counterfactual counterparts in the role.

[4] This difference is particularly stark, and is potentially evidence for the idea that ARPA-style programs fill a technoscientific niche that is undersupplied in the rest of the ecosystem.

[5] This represents an average across the estimates from Goldstein and Narayanamurti’s controlled regressions on patent and publication outcomes, weighted by the performer mix of the comparison offices.

[6] This adjustment uses the paper's budget-matched results in Table A15 (which limit the traditional-agency samples to their top-performing grants within ARPA-E's total budget window and re-run one of the regressions) to estimate a shade-down from overall effects to budget-matched effects. Applying this shade-down to the overall unmatched benefit yields a roughly 1.3x output advantage.

[7] If you want to help fill this knowledge gap, send me an email!

[8] These programs do, in fact, cost money to run – but comparatively little relative to the potential upside. If you’re a funder and want to learn more, please do reach out.

[9] The ARIA partnership is particularly notable, as it was explicitly designed to support ARIA's incoming programme directors.